How to build an AI app using TensorFlow and Android


Abstract:

This article describes a case study on building a mobile app that recognizes objects using machine learning (ML). We used TensorFlow Lite, an open-source ML library provided by Google, and the article also gives a brief overview of TensorFlow Lite.

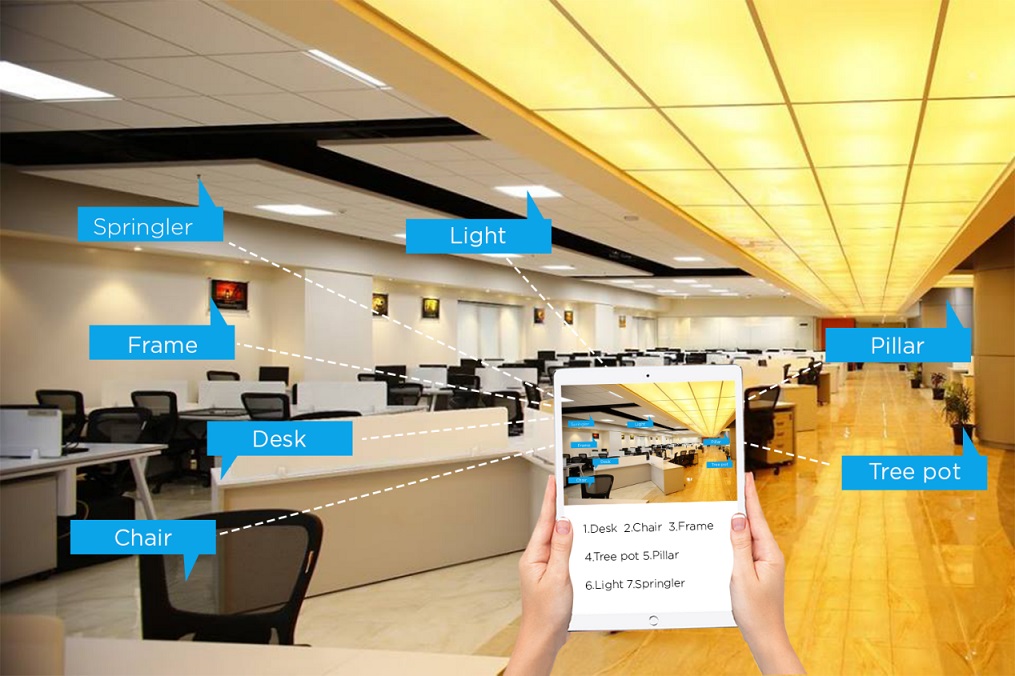

Object Identification in Mobile app (Creative visualization)

TensorFlow Lite:

With TensorFlow, we can implement machine learning (ML) and artificial intelligence (AI)-powered applications that run on mobile phones, and ML adds great power to a mobile application. TensorFlow Lite is a lightweight ML library for mobile and embedded devices. TensorFlow works well on large devices, while TensorFlow Lite works really well on small devices because it is easier to use, faster, and smaller on mobile hardware.

Machine Learning:

Artificial intelligence is the science of making smart things, such as an autonomous car or a computer that draws conclusions from historical data. It is important to understand that the vision of AI is largely realized through ML, a technology in which a computer trains itself from data.

Neural Network:

A neural network is one of the algorithms used in machine learning. For example, given a set of labeled images, we can train a neural network to classify whether each image shows a cat or a dog. There are many possible use cases for combining ML with mobile applications, starting with image recognition.
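To make "training" concrete, here is a minimal sketch of the simplest possible neural network: a single neuron (a perceptron) trained with the classic perceptron learning rule. The two input features and the toy data points are hypothetical stand-ins for features extracted from cat and dog images. A real image classifier such as MobileNet has millions of parameters and is trained differently (with gradient descent), so this only illustrates the idea that the model adjusts its own weights from labeled examples.

```java
import java.util.Arrays;

/** A single artificial neuron (perceptron) that learns a binary label from numeric features. */
class Perceptron {
    private final double[] weights;
    private double bias;

    Perceptron(int numFeatures) {
        this.weights = new double[numFeatures];
        this.bias = 0.0;
    }

    /** Returns 1 (e.g. "dog") if the weighted sum is positive, otherwise 0 (e.g. "cat"). */
    int predict(double[] x) {
        double sum = bias;
        for (int i = 0; i < weights.length; i++) {
            sum += weights[i] * x[i];
        }
        return sum > 0 ? 1 : 0;
    }

    /** Perceptron learning rule: nudge the weights toward every misclassified example. */
    void train(double[][] xs, int[] labels, double learningRate, int epochs) {
        for (int epoch = 0; epoch < epochs; epoch++) {
            int errors = 0;
            for (int n = 0; n < xs.length; n++) {
                int error = labels[n] - predict(xs[n]); // -1, 0 or +1
                if (error != 0) {
                    errors++;
                    for (int i = 0; i < weights.length; i++) {
                        weights[i] += learningRate * error * xs[n][i];
                    }
                    bias += learningRate * error;
                }
            }
            if (errors == 0) {
                return; // every training example is now classified correctly
            }
        }
    }

    public static void main(String[] args) {
        // Hypothetical features, e.g. [ear pointiness, snout length]; label 0 = cat, 1 = dog.
        double[][] xs = { {0.9, 0.2}, {0.8, 0.3}, {0.3, 0.9}, {0.2, 0.8} };
        int[] labels = { 0, 0, 1, 1 };
        Perceptron p = new Perceptron(2);
        p.train(xs, labels, 0.1, 100);
        for (double[] x : xs) {
            System.out.println(Arrays.toString(x) + " -> " + p.predict(x));
        }
    }
}
```

On this toy data the training loop converges after a few passes, and `main` prints the predictions 0, 0, 1, 1, matching the labels.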

Machine Learning Model Inside our Mobile Applications:

Instead of sending all raw images to the server, we can extract the meaning from the raw data on the device and send only that to the server, so we get much faster responses from cloud services.

The ML model runs inside our mobile application, so the app can recognize what kind of object is in each image and send just the label, such as cat, dog, or human face, to the server. This reduces the traffic to the server. We are going to use TensorFlow Lite to run the model in the mobile app.
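A minimal sketch of that idea, assuming the model has already produced one probability per label: pick the most likely label on the device and send only that short string instead of the raw image. The label set, the probabilities, and the 224 x 224 RGB frame size below are illustrative assumptions, and the actual network call to the server is omitted.

```java
import java.nio.charset.StandardCharsets;

class OnDeviceLabeling {
    /** Returns the index of the largest probability (the model's best guess). */
    static int argMax(float[] probabilities) {
        int best = 0;
        for (int i = 1; i < probabilities.length; i++) {
            if (probabilities[i] > probabilities[best]) {
                best = i;
            }
        }
        return best;
    }

    /** Builds the small payload we would send to the server instead of the image. */
    static byte[] labelPayload(String[] labels, float[] probabilities) {
        return labels[argMax(probabilities)].getBytes(StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        String[] labels = { "cat", "dog", "human face" };  // hypothetical label set
        float[] probabilities = { 0.10f, 0.85f, 0.05f };   // hypothetical model output
        byte[] payload = labelPayload(labels, probabilities);
        int rawImageBytes = 224 * 224 * 3;                 // one uncompressed RGB frame
        System.out.println("label = " + new String(payload, StandardCharsets.UTF_8));
        System.out.println("payload bytes = " + payload.length
                + " vs raw image bytes = " + rawImageBytes);
    }
}
```

Running `main` prints `label = dog` and compares a 3-byte payload with the 150528 bytes of a single raw 224 x 224 RGB frame, which is the traffic saving described above.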

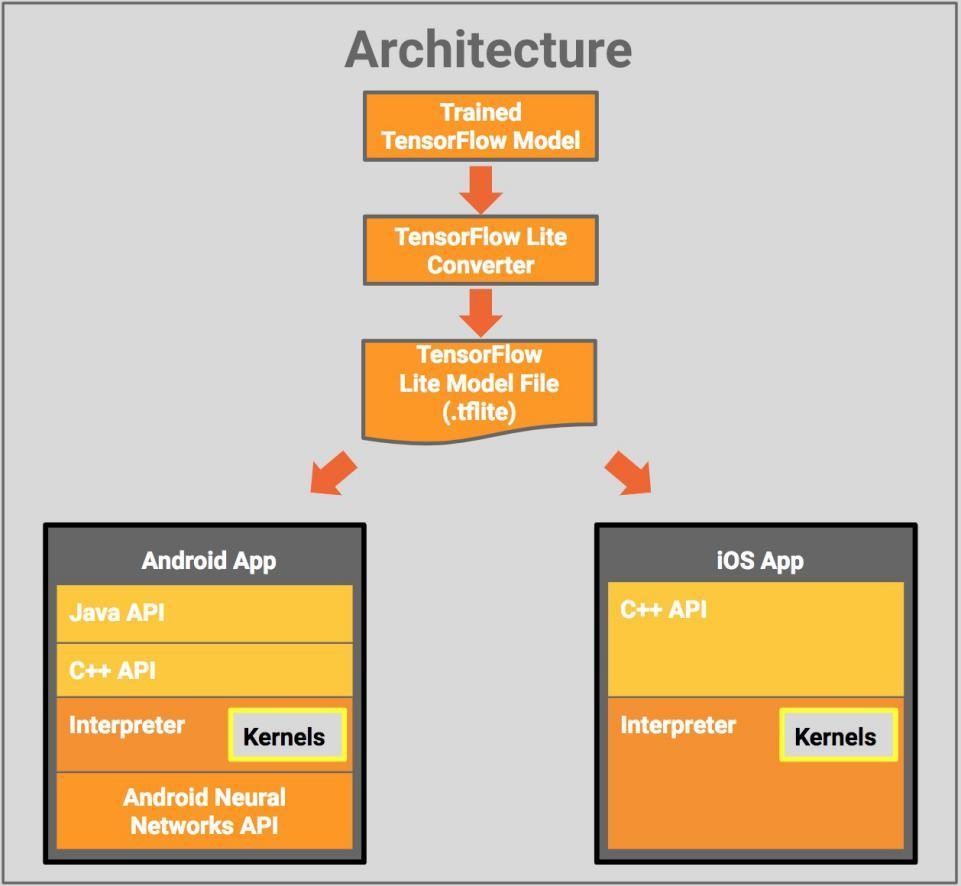

TensorFlow Lite Architecture:

DEMO: Build an application powered by machine learning (TensorFlow Lite in Android)

https://www.youtube.com/watch?v=olQNKvMbpRg

GitHub link:

Clone the source code below and import it into Android Studio.

https://github.com/Chokkar-G/machinelearningapp.git

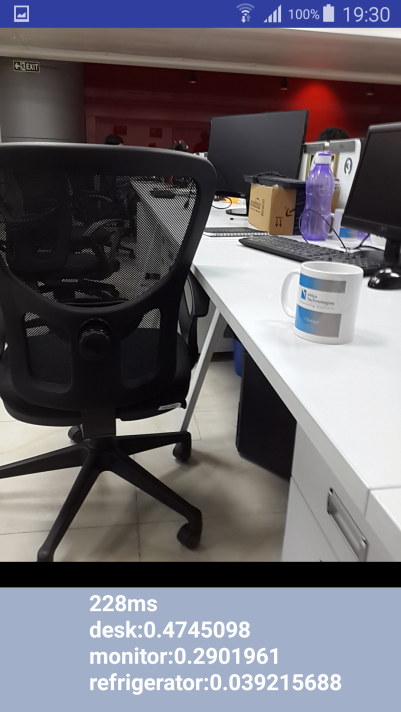

(Screencast) TensorFlow Lite object detection

This post walks through an example Android app that uses TensorFlow Lite. The app is a simple camera app that continuously classifies images using a pretrained, quantized MobileNet model.
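Because the model is quantized, its output is a row of unsigned 8-bit scores rather than floats. To our understanding, the quantized MobileNet used here emits one byte per label, and Google's Android demo maps each byte back to a score in [0, 1] by dividing by 255 before picking the top results. The sketch below assumes that layout (one unsigned byte per label, in label-file order) and shows only the post-processing step in plain Java; the class and method names are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.PriorityQueue;

class QuantizedTopK {
    /** One classification result: a label and its dequantized score in [0, 1]. */
    static final class Recognition {
        final String label;
        final float score;

        Recognition(String label, float score) {
            this.label = label;
            this.score = score;
        }

        @Override
        public String toString() {
            return label + " (" + score + ")";
        }
    }

    /** Java bytes are signed, so mask with 0xFF to read 0..255, then scale to [0, 1]. */
    static float dequantize(byte raw) {
        return (raw & 0xFF) / 255.0f;
    }

    /** Returns the k highest-scoring labels, best first. */
    static List<Recognition> topK(byte[] rawScores, List<String> labels, int k) {
        // Min-heap holding the k best results so far; the weakest one sits at the head.
        PriorityQueue<Recognition> heap =
                new PriorityQueue<>((a, b) -> Float.compare(a.score, b.score));
        for (int i = 0; i < rawScores.length; i++) {
            heap.add(new Recognition(labels.get(i), dequantize(rawScores[i])));
            if (heap.size() > k) {
                heap.poll(); // drop the weakest result
            }
        }
        List<Recognition> result = new ArrayList<>(heap);
        result.sort((a, b) -> Float.compare(b.score, a.score)); // highest score first
        return result;
    }
}
```

The label list must have at least as many entries as the output row; otherwise `labels.get(i)` throws. A heap keeps the cost at O(n log k), which matters little for about a thousand labels but avoids sorting the whole row on every frame.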

Workflow:

Step 1: Add the TensorFlow Lite Android AAR:

Android apps are typically written in Java, while core TensorFlow is written in C++, so a JNI library is provided to interface between the two.

The following lines in the app's build.gradle file include the newest version of the AAR:

build.gradle:

repositories {
    google()
    mavenCentral() // google.bintray.com has been shut down; the AAR is published on Maven Central
}

dependencies {
    // …
    implementation 'org.tensorflow:tensorflow-lite:+'
}

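The Android Asset Packaging Tool (AAPT) must not compress .lite or .tflite files in the assets folder, because a compressed asset cannot be opened as a file descriptor and memory-mapped. Add the following block: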

android {
    aaptOptions {
        noCompress "tflite"
        noCompress "lite"
    }
}
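Why the noCompress setting matters: in Google's TensorFlow Lite Android demo, the model loader opens the .tflite asset with `AssetManager.openFd()`, which only works for assets stored uncompressed, and then memory-maps the byte range starting at `AssetFileDescriptor.getStartOffset()` with length `getDeclaredLength()` inside the APK. We assume the sample app does the same. The sketch below reproduces just the mapping step in plain Java against an ordinary file so it can run off-device; the class name `ModelMapper` is hypothetical, and on Android the offset and length would come from the `AssetFileDescriptor`.

```java
import java.io.IOException;
import java.nio.MappedByteBuffer;
import java.nio.channels.FileChannel;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;

class ModelMapper {
    /**
     * Memory-maps bytes [offset, offset + length) of a file, read-only.
     * This only yields the model bytes if that region is stored as-is
     * (uncompressed), which is what noCompress guarantees for assets in an APK.
     */
    static MappedByteBuffer mapRegion(Path file, long offset, long length) throws IOException {
        try (FileChannel channel = FileChannel.open(file, StandardOpenOption.READ)) {
            // A mapping stays valid after the channel that created it is closed.
            return channel.map(FileChannel.MapMode.READ_ONLY, offset, length);
        }
    }
}
```

Memory-mapping lets the interpreter read the model straight from the APK without copying it onto the Java heap; the resulting `MappedByteBuffer` is the kind of buffer that can be handed to `new Interpreter(...)`.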

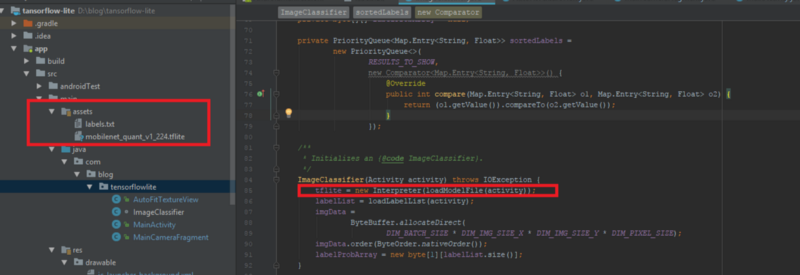

Step 2: Add the pretrained model files to the project:

a. Download the pretrained quantized MobileNet TensorFlow Lite model from here:

https://storage.googleapis.com/download.tensorflow.org/models/tflite/mobilenet_v1_224_android_quant_2017_11_08.zip

b. Unzip the archive and copy mobilenet_quant_v1_224.tflite and label.txt to the assets directory: src/main/assets.
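The label file maps each output position of the model to a human-readable name. A minimal sketch of reading it, assuming one label per line in the same order as the model's outputs; on Android the `InputStream` would come from `activity.getAssets().open(...)` with the label file's name, while here any `InputStream` works so the example runs off-device. `LabelLoader` is a hypothetical name.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStream;
import java.io.InputStreamReader;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.List;

class LabelLoader {
    /** Reads one label per line; line i becomes label i, matching output index i of the model. */
    static List<String> loadLabels(InputStream in) throws IOException {
        List<String> labels = new ArrayList<>();
        try (BufferedReader reader =
                new BufferedReader(new InputStreamReader(in, StandardCharsets.UTF_8))) {
            String line;
            while ((line = reader.readLine()) != null) {
                labels.add(line);
            }
        }
        return labels;
    }
}
```

On a device, a call such as `LabelLoader.loadLabels(activity.getAssets().open("label.txt"))` would load the list once at startup; blank lines are deliberately kept so that indices never shift.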

(Screencast) Placing model file in assets folder

Step 3: Load the TensorFlow Lite model:

The Interpreter class (Interpreter.java) drives model inference with TensorFlow Lite:

tflite = new Interpreter(loadModelFile(activity));
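Before calling the interpreter, each camera frame has to be converted into the byte layout the quantized model expects. A minimal sketch, assuming a 224 x 224 input with one unsigned byte per RGB channel (as the model name mobilenet_quant_v1_224 suggests) and pixels supplied as packed ARGB ints, such as those returned by Android's `Bitmap.getPixels()`. The filled buffer would then be passed, together with an output array, to `tflite.run(input, output)`; `InputPreprocessor` is a hypothetical name.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;

class InputPreprocessor {
    static final int IMAGE_SIZE = 224; // assumed model input width and height
    static final int CHANNELS = 3;     // R, G, B

    /** Packs ARGB pixels into a direct buffer of R, G, B bytes; the alpha channel is dropped. */
    static ByteBuffer toInputBuffer(int[] argbPixels) {
        if (argbPixels.length != IMAGE_SIZE * IMAGE_SIZE) {
            throw new IllegalArgumentException("expected " + IMAGE_SIZE * IMAGE_SIZE + " pixels");
        }
        ByteBuffer buffer = ByteBuffer.allocateDirect(IMAGE_SIZE * IMAGE_SIZE * CHANNELS);
        buffer.order(ByteOrder.nativeOrder());
        for (int pixel : argbPixels) {
            buffer.put((byte) ((pixel >> 16) & 0xFF)); // red
            buffer.put((byte) ((pixel >> 8) & 0xFF));  // green
            buffer.put((byte) (pixel & 0xFF));         // blue
        }
        buffer.rewind(); // reset the position so the interpreter reads from the start
        return buffer;
    }
}
```

A direct buffer avoids an extra copy when the data crosses the JNI boundary into the C++ interpreter; in a real app it would be allocated once and refilled for every frame rather than recreated.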

Step 4: Run the app on a device.

Conclusion:

Camera-based object detection has not yet matched the accuracy of the human eye; cameras cannot replace human vision. Here, detection means that a trained model identifies an object or a person on its own. Even so, ML has great potential, and this app is only a simple demonstration of it. We could create a robot that changes its behavior and its way of talking according to who is in front of it (a child or an adult). We could use deep learning algorithms to identify skin cancer, or to detect defective pieces and automatically stop a production line as soon as possible.
